A Definition Updated for the AI Compliance Era

Allow me to attempt a definition of something I have observed for two decades across hundreds of corporate environments, something that has just gone through its largest growth cycle since Sarbanes-Oxley. The policy.

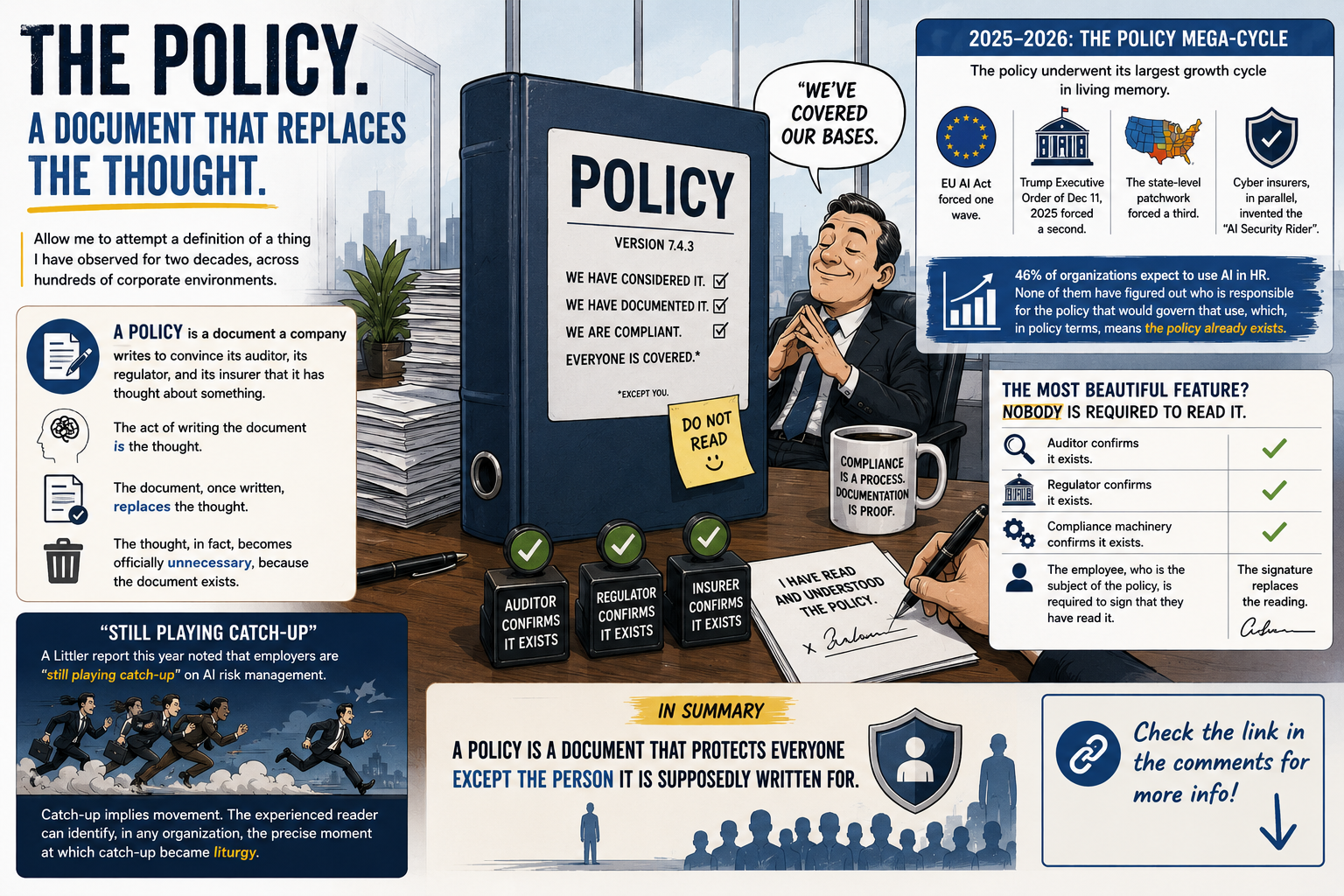

A policy is a document a company writes to convince its auditor, its regulator, its insurer, and its own legal team that it has thought about something. The act of writing the document is the thought. The document, once written, replaces the thought. The thought, in fact, becomes officially unnecessary, because the document exists.

This sounds like satire. It is also, on closer reading, a functional description.

The Classical Function of a Policy

In the classical theory, the policy is a tool of governance. It states the principles by which an organization commits to act. It is the upstream document from which procedures, standards, and operational practices derive. It is read, in the classical theory, by the people whose behavior it is supposed to shape.

In practice, the policy is read by approximately five people in the organization: the policy owner who wrote it, the compliance officer who indexed it, the auditor who verified its existence, the legal counsel who reviewed its phrasing, and the executive who signed it. The classical theory’s “people whose behavior it shapes” are, in practice, not on the reading list.

This is not a recent phenomenon. The asymmetry between policy-as-document and policy-as-behavior dates, as best I can tell, to the late 1990s. What is recent is the volume.

The 2025–2026 Policy Production Cycle

The policy industry, in the last eighteen months, has had its largest growth cycle since the post-Enron Sarbanes-Oxley wave of the mid-2000s.

The EU AI Act, with its August 2, 2026 compliance milestone for high-risk AI systems, forced one wave of new policies across European employers. President Trump’s Executive Order of December 11, 2025, titled “Ensuring a National Policy Framework for Artificial Intelligence”, forced a second wave across U.S. federal contractors and, indirectly, across their supply chains. The state-level patchwork in the United States, with New York, Colorado, Illinois, Utah and California each at a different stage with its own AI laws, forced a third wave specific to state jurisdictions.

In parallel, the cyber insurance carriers invented something called the “AI Security Rider”, a coverage condition that requires the insured organization to document, in policy form, its AI-specific security controls. This produced a fourth wave, this time driven not by the regulator but by the underwriter. The underwriter, it should be noted, reads the policy more carefully than the regulator does. The underwriter actually loses money if the policy is fictional.

A SHRM survey reports that 46 percent of organizations expect to use AI in HR functions in 2026. None of them has yet figured out who, exactly, is responsible for the policy that would govern that use. In the contemporary policy environment, that means the policy already exists: the “who is responsible” question is, structurally, downstream of the policy, not upstream.

The Signature-as-Reading Phenomenon

The most beautiful feature of the contemporary policy is that nobody is required to read it.

The auditor reads it, confirms it exists, and certifies the existence in a separate document.

The regulator reads it, confirms it exists, and acknowledges receipt in a third document.

The insurer reads it, confirms it covers the underwriting criteria, and prices the premium accordingly.

The compliance machinery, in aggregate, reads it.

The employee, who is the subject of the policy, is required to sign that they have read it. The signature is, in contemporary practice, structurally equivalent to having read it. The signature is the policy’s only interaction with the actual human population it governs. The signature happens. The reading does not.

This is not a corner case. It is the median case. Surveys of large employers consistently show that the median employee can name fewer than three of the policies they have signed in the previous twelve months. The median manager can name fewer than five. The median CISO, asked the same question about cyber policies, can name a few more, but rarely all of them.

The Enforcement Gap

Enforcement is the part of the policy lifecycle where the document meets reality. It is, by industry consensus, the weakest part.

A Littler report this year noted that employers are “still playing catch-up” on AI risk management. The phrase deserves attention. “Catch-up” implies movement. The phrase concedes that the policy exists, that the employer has filed it, that the existence has been certified, and yet the operational reality remains behind. The phrase smuggles, in two words, the entire structural pathology of contemporary policy practice.

The New York State Comptroller, in a December 2025 audit of New York City’s enforcement of its automated employment decision tool law (Local Law 144), documented that enforcement faces “practical challenges”. Translated out of policy language, the phrase says the same thing as the Littler observation, in a different jurisdiction. The law exists. The policy exists. The signature exists. Nothing else exists.

The Inversion

A policy, in its classical theory, was meant to bind behavior to a principle. In its contemporary practice, the policy binds nothing. It documents the principle. It documents that the principle has been documented. It documents that the documentation has been signed. It does not document that the behavior has changed.

This inversion is the operative reality of corporate governance in 2026. It is not, importantly, a malicious inversion. Nobody designed it. It emerged, over thirty years, from the cumulative interaction of audit requirements, regulatory expectations, legal risk management, insurance underwriting, and HR documentation practice. Each of these layers, individually, has a reason to want the document. None of them has a reason to want the behavior.

The behavior, in fact, is the one thing in the entire system that has no specific advocate. The CISO advocates for the technical control. The compliance officer advocates for the documented evidence. The legal counsel advocates for the language. The HR function advocates for the signature. The behavior of the employee, who is the subject of the policy, is downstream of all of them, and is, in the typical organization, not measured.

A policy is, in summary, a document that protects everyone except the person it is supposedly written for. It protects the auditor, who has done their work. It protects the regulator, who has confirmed receipt. It protects the insurer, who has priced the premium. It protects the executive, who has signed. It protects the legal counsel, who has phrased it carefully.

The employee whose behavior the policy was, in the classical theory, supposed to shape, is the one party in the system whose interaction with the policy ends at the signature. The classical theory predicted that the signature would be followed by the reading, which would be followed by the changed behavior. The contemporary practice has dispensed with the second step, and the third is consequently optional.

A serious organization would treat the gap between signature and behavior as the primary indicator of policy effectiveness. It would measure the gap. It would publish the measurement. It would, on the basis of the measurement, redesign the policy, the training, the enforcement.

We are, demonstrably, not yet that serious organization. The next AI policy wave, when it arrives with the August 2026 EU AI Act milestone, will be signed by the same employees who signed the previous one, and the behavior, in the typical organization, will remain unchanged.

The policy, meanwhile, will have a beautiful binding.

All my “insane” books on cybersecurity and governance are here 👉 https://www.amazon.it/stores/author/B0FB47T6Q4/allbooks